Episode Description

Subtitle: If you think reward-seekers are plausible, you should also think “fitness-seekers” are plausible. But their risks aren’t the same..

The AI safety community often emphasizes reward-seeking as a central case of a misaligned AI alongside scheming (e.g., Cotra's sycophant vs schemer, Carlsmith's terminal vs instrumental training-gamer). We are also starting to see signs of reward-seeking-like motivations.

But I think insufficient care has gone into delineating this category. If you were to focus on AIs who care about reward in particular[1], you’d be missing some comparably-or-more plausible nearby motivations that make the picture of risk notably more complex.

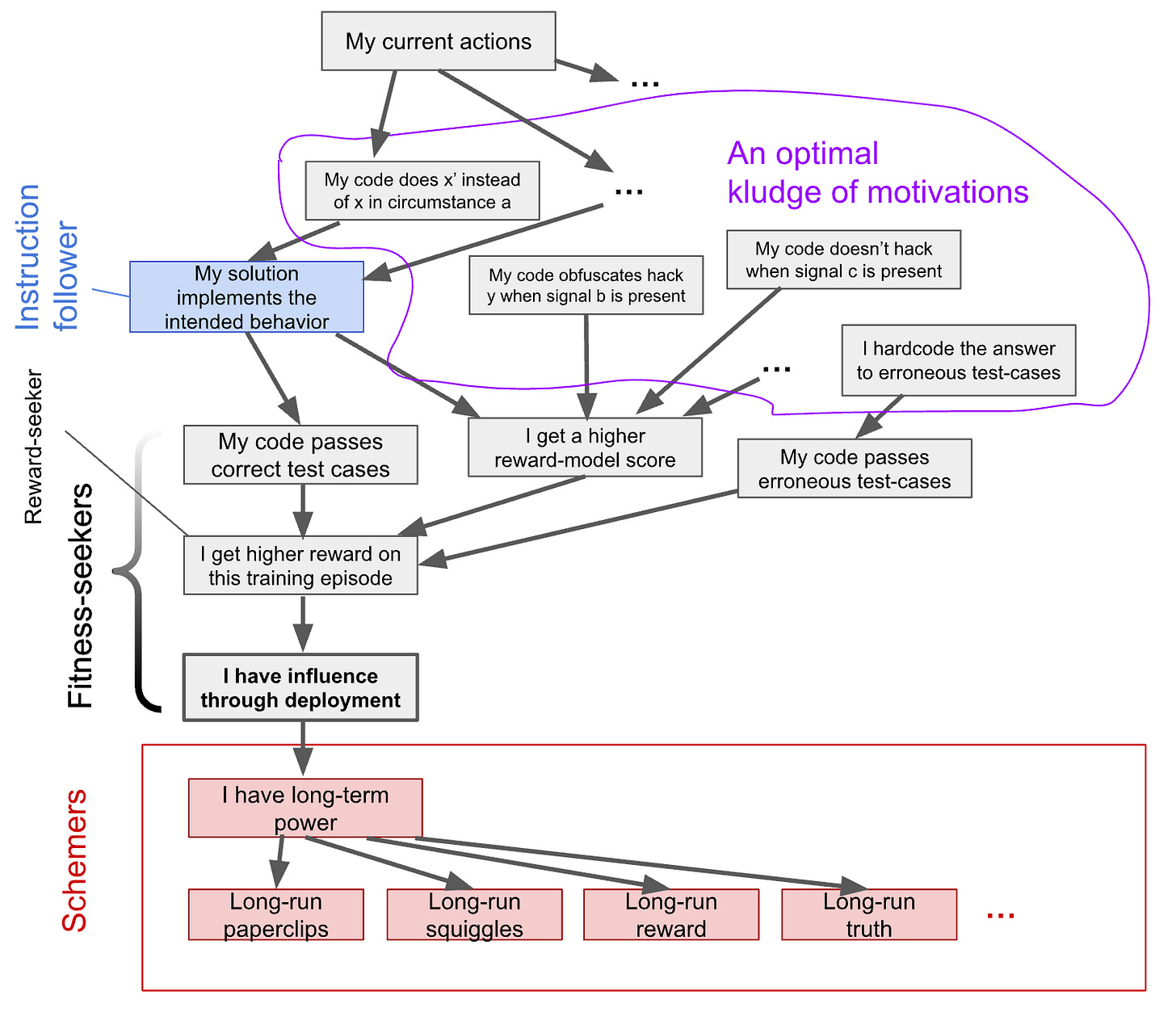

A classic reward-seeker wants high reward on the current episode. But an AI might instead pursue high reinforcement on each individual action. Or it might want to be deployed, regardless of reward. I call this broader family fitness-seekers. These alternatives are plausible for the same reasons reward-seeking is—they’re simple goals that generalize well across training and don’t require unnecessary-for-fitness instrumental reasoning—but they pose importantly different risks.

I argue:

-

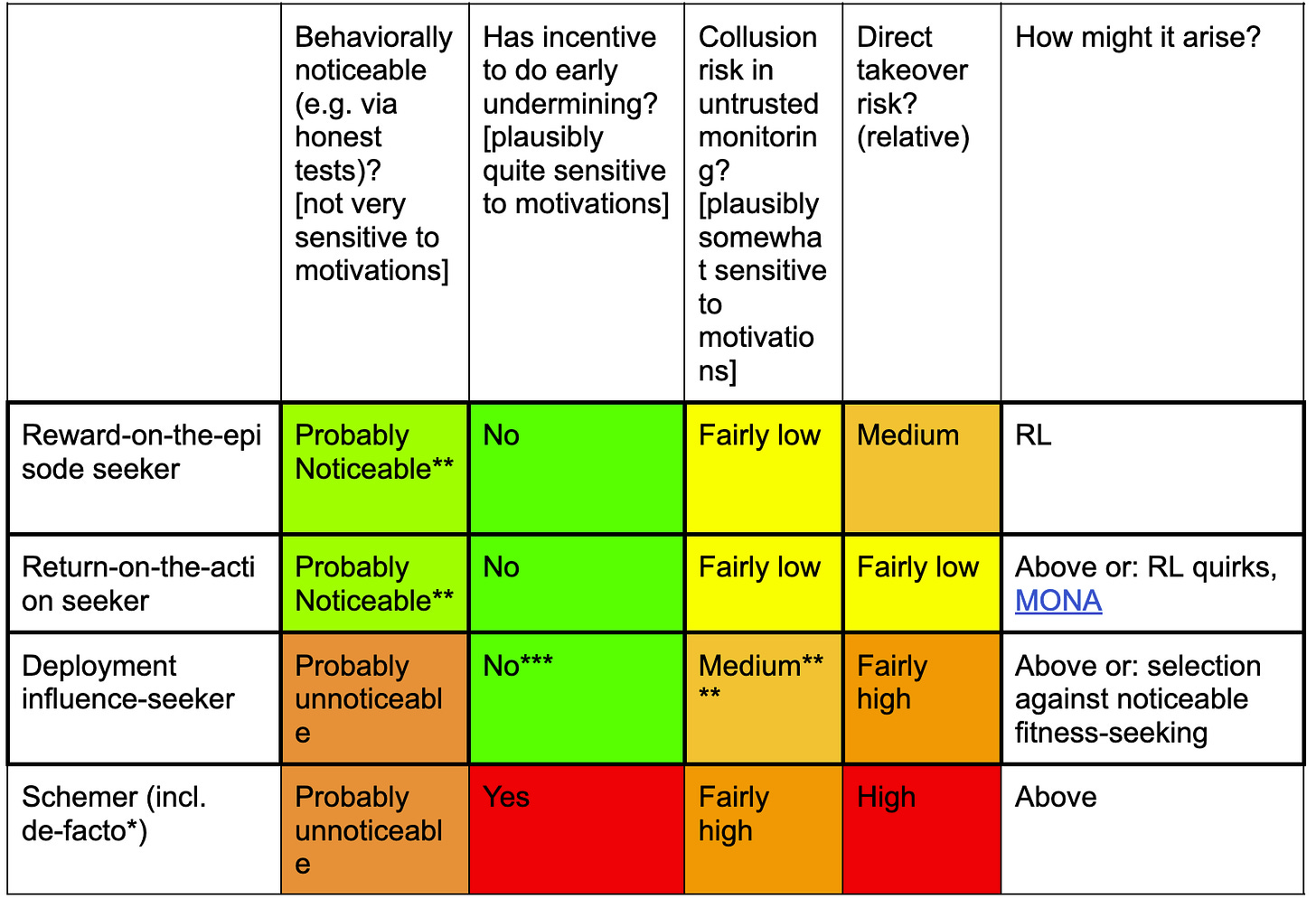

While idealized reward-seekers have the nice property that they’re probably noticeable at first (e.g., via experiments called “honest tests”), other kinds of fitness-seekers, especially “influence-seekers”, aren’t so easy to spot.

- [...]

---

Outline:

(02:33) The assumptions that make reward-seekers plausible also make fitness-seekers plausible

(05:10) Some types of fitness-seekers

(06:56) How do they change the threat model?

(09:51) Reward-on-the-episode seekers and their basic risk-relevant properties

(11:55) Reward-on-the-episode seekers are probably noticeable at first

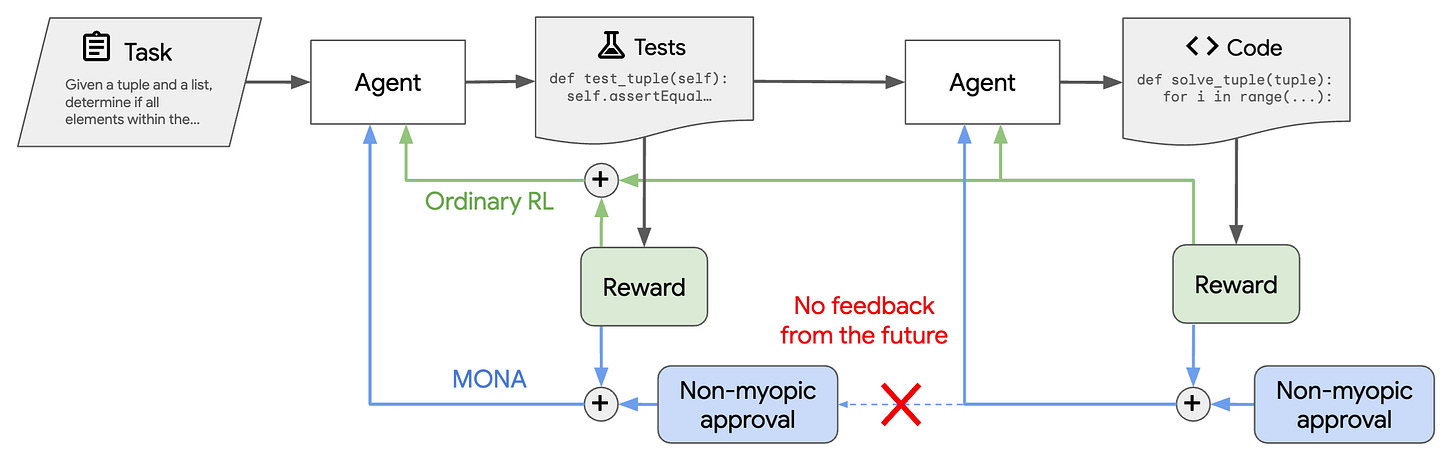

(16:04) Reward-on-the-episode seeking monitors probably don't want to collude

(17:53) How big is an episode?

(18:38) Return-on-the-action seekers and sub-episode selfishness

(23:23) Influence-seekers and the endpoint of selecting against fitness-seekers

(25:16) Behavior and risks

(27:31) Fitness-seeking goals will be impure, and impure fitness-seekers behave differently

(27:58) Conditioning vs. non-conditioning fitness-seekers

(29:17) Small amounts of long-term power-seeking could substantially increase some risks

(30:37) Partial alignment could have positive effects

(31:18) Fitness-seekers' motivations upon reflection are hard to predict

(32:40) Conclusions

(34:17) Appendix: A rapid-fire list of other fitness-seekers

The original text contained 32 footnotes which were omitted from this narration.

---

First published:

January 29th, 2026

Source:

https://blog.redwoodresearch.org/p/fitness-seekers-generalizing-the

---

Narrated by TYPE III AUDIO.

---

Images from the article:

Apple Podcasts and Spotify do not show images in the episode description. Try Pocket Casts, or another podcast app.